🧠 Introduction

Snowflake has rapidly become the go-to cloud data platform thanks to its scalability, performance, and ease of use. As organizations continue to modernize their data infrastructure, Snowflake expertise is in high demand. If you’re preparing for a Snowflake Data Engineer or Developer interview in 2025, this guide compiles 50 essential questions and answers — covering concepts, architecture, security, and real-world scenarios.

🔹 1. What is Snowflake, and how is it different from traditional data warehouses?

Answer:

Snowflake is a fully-managed, cloud-native data platform designed specifically for data warehousing, analytics, and data sharing in a multicloud environment. Unlike traditional on-premise or legacy warehouses like Oracle, Teradata, or SQL Server, Snowflake was built from scratch to leverage the scalability, flexibility, and elasticity of cloud computing.

Key differentiators:

- Separation of compute and storage: Snowflake decouples compute and storage, allowing each to scale independently. This provides immense flexibility and cost control.

- Fully managed: No need for infrastructure management, indexing, or performance tuning — Snowflake takes care of all of it.

- Multi-cluster architecture: Supports automatic scaling for concurrent users via multi-cluster virtual warehouses.

- Support for structured and semi-structured data: JSON, Parquet, Avro, XML, and ORC can be stored and queried natively.

- Secure data sharing: Share live data across different Snowflake accounts or even with external consumers, without physically copying data.

🔹 2. Explain Snowflake’s architecture in detail.

Answer:

Snowflake has a multi-tier architecture designed for high concurrency, scalability, and availability. It consists of three primary layers:

- Storage Layer:

- All data (structured and semi-structured) is stored in compressed, columnar format in cloud object storage (AWS S3, Azure Blob, or GCP).

- Data is automatically divided into micro-partitions (approx. 16 MB in size) and each partition stores rich metadata for efficient pruning during queries.

- Compute Layer:

- Consists of Virtual Warehouses, which are clusters of compute resources that perform operations like loading data, running queries, and transformations.

- Multiple warehouses can run concurrently without contention, and they can scale vertically (bigger size) or horizontally (multi-cluster).

- Cloud Services Layer:

- Manages authentication, metadata, query parsing/optimization, access control, and infrastructure management.

- Acts as the brain of Snowflake, coordinating between compute and storage.

🔹 3. What are Virtual Warehouses in Snowflake?

Answer:

A Virtual Warehouse is a cluster of compute resources in Snowflake that provides the necessary processing power for operations like:

- Executing SQL queries

- Data loading/unloading

- Running transformations

Key features:

- Auto-suspend and resume: Helps save cost by automatically suspending when idle and resuming when a query is submitted.

- Concurrency handling: Multiple users or workloads can be isolated using different virtual warehouses.

- Scalability: You can increase or decrease the size (XS to 6XL) and even run multi-cluster warehouses for concurrent workloads.

Example:

An ETL job can run on a separate ETL_WH, while analysts query data using a BI_WH, both isolated from each other.

🔹 4. What are Micro-partitions in Snowflake? How do they help performance?

Answer:

Snowflake stores all table data in micro-partitions — internally optimized storage units of around 16MB (compressed). These are:

- Immutable

- Automatically managed

- Columnar in nature

Benefits:

- Metadata tracking: Each partition stores stats like min/max values for each column.

- Automatic pruning: During queries, only relevant partitions are scanned — others are skipped.

- No indexing required: Performance remains high even without manual indexing or partitions.

Use case:

A query filtering on country = ‘USA’ may only scan 3 out of 5000 micro-partitions, drastically reducing execution time.

🔹 5. What is Time Travel in Snowflake and how does it work?

Answer:

Time Travel allows users to access historical data in Snowflake — whether it’s a table or schema — as it existed at a previous point in time.

Key use cases:

- Accidental data deletion or modification recovery

- Comparative analysis or debugging

- Zero-copy cloning of tables/schemas at historical timestamps

Syntax examples:

SELECT * FROM orders AT (TIMESTAMP => ‘2025–05–01 12:00:00’);

Retention Period:

- 1 day by default

- Up to 90 days in Enterprise Edition

🔹 6. What is Fail-safe in Snowflake? How is it different from Time Travel?

Answer:

Fail-safe is a 7-day recovery period after Time Travel expires, during which Snowflake can recover historical data, but only through support.

Differences:

- Time Travel is user-accessible.

- Fail-safe is only available to Snowflake for disaster recovery.

Use Fail-safe as a last resort, not a backup strategy.

🔹 7. Explain Snowflake’s approach to handling semi-structured data.

Answer:

Snowflake supports semi-structured formats like JSON, Avro, Parquet, and XML via a native data type called VARIANT.

Steps:

- Load data as-is into VARIANT columns.

- Query using colon notation:

SELECT data:id::STRING FROM json_table;

- Use functions like FLATTEN() to normalize nested arrays.

Advantages:

- No schema rigidity.

- Schema-on-read approach.

- Optimized storage and querying, unlike traditional RDBMS.

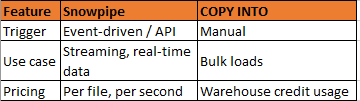

🔹 8. What is Snowpipe? How is it different from COPY INTO?

Answer:

Snowpipe is Snowflake’s serverless, auto-ingest feature for continuous data loading. It automatically loads files from external/internal stages as they arrive.

COPY INTO is for manual or scheduled batch loading.

Example: IoT sensor data can stream into Snowflake in near real-time using Snowpipe and an S3 event notification.

🔹 9. How does Snowflake support data sharing?

Answer:

Snowflake enables secure, live, and real-time data sharing without duplication via its Data Sharing feature.

You can:

- Share data between different Snowflake accounts (reader or consumer).

- Create shares containing tables/views.

- Consumers query the shared data directly, no copy needed.

Example:

A company shares financial data with an audit firm by creating a secure share, and the firm accesses it using a reader account.

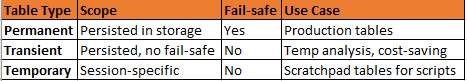

🔹 10. What are the types of tables in Snowflake?

Answer:

Differences:

- Transient tables save cost and are useful for intermediate or non-critical data.

- Temporary tables vanish once the session ends.

🔹 11. What is zero-copy cloning in Snowflake?

Answer:

Zero-copy cloning allows you to create a clone (copy) of a table, schema, or database instantly without duplicating the underlying data. It’s extremely useful for:

- Dev/Test environments

- Data backups

- Experimentation

Key points:

- Clones share the same micro-partitions as the original.

- Only changes (deltas) are stored after cloning.

- Saves cost and time since data isn’t copied.

Example:

CREATE TABLE cloned_orders CLONE original_orders;

🔹 12. What are stages in Snowflake?

Answer:

A stage is a location in Snowflake where data files are stored before being loaded into or unloaded from Snowflake tables.

Types of stages:

- Internal Stage: Managed by Snowflake (e.g., @%my_table or named stages like @my_stage)

- External Stage: Integrates with AWS S3, Azure Blob, or GCP buckets.

Use case: Load CSVs from S3 into Snowflake using COPY INTO via an external stage.

🔹 13. What is automatic clustering?

Answer:

Snowflake automatically handles micro-partitioning, but for large tables with frequent queries on non-natural keys, clustering can improve performance.

Automatic Clustering:

- Automatically reorganizes micro-partitions based on defined clustering keys.

- Runs in the background.

- Removes the need for manual clustering operations.

Syntax:

ALTER TABLE orders CLUSTER BY (customer_id);

🔹 14. How does Snowflake handle concurrency?

Answer:

Snowflake’s multi-cluster virtual warehouse architecture allows for concurrent processing without query queuing.

Concurrency solutions:

- Scaling up: Increase size of the virtual warehouse (more compute).

- Scaling out: Use multi-cluster warehouses to run multiple clusters in parallel.

Ideal for BI tools like Tableau, Power BI where many users run dashboards simultaneously.

🔹 15. What are Snowflake tasks?

Answer:

Tasks in Snowflake allow you to schedule and automate SQL statements, such as:

- Incremental loading

- ETL workflows

- Data transformations

Example:

CREATE TASK daily_sales_task

WAREHOUSE = etl_wh

SCHEDULE = ‘1 day’

AS

INSERT INTO daily_sales SELECT * FROM raw_sales;

Tasks can be chained to form DAGs (Directed Acyclic Graphs) for complex orchestration.

🔹 16. Explain materialized views in Snowflake.

Answer:

A materialized view is a precomputed view whose results are stored for fast access. Snowflake keeps it up to date automatically (asynchronously).

Use cases:

- Aggregated metrics

- Complex joins

- Repeated subqueries

Example:

CREATE MATERIALIZED VIEW total_sales_mv AS

SELECT region, SUM(amount) FROM sales GROUP BY region;

🔹 17. How do you secure data in Snowflake?

Answer:

Snowflake provides multiple layers of security:

- Encryption: All data encrypted in transit and at rest.

- Access control: Role-based access control (RBAC)

- Network security: IP allowlisting, private connectivity (e.g., AWS PrivateLink)

- Row-level security: Using secure views or row access policies.

- Column-level masking: Obscure sensitive fields like SSN, credit card number.

🔹 18. What is a stream in Snowflake?

Answer:

A stream tracks changes (CDC — Change Data Capture) to a table over time. It’s commonly used for incremental ETL.

Example:

CREATE OR REPLACE STREAM order_stream ON TABLE orders;

This lets you read only the inserted/updated/deleted rows since the last read.

🔹 19. What are roles and how is RBAC implemented?

Answer:

RBAC (Role-Based Access Control) in Snowflake allows you to grant access rights to roles, which are then assigned to users.

Hierarchy:

- SYSADMIN: Create objects

- SECURITYADMIN: Manage roles/users

- PUBLIC: Default role

Use GRANT and REVOKE statements to manage access.

🔹 20. What is the difference between UNION and UNION ALL?

Answer:

- UNION: Combines rows from two queries and removes duplicates.

- UNION ALL: Combines all rows and keeps duplicates.

Example:

SELECT city FROM customers

UNION ALL

SELECT city FROM suppliers;

🔹 31. What is the use of a masking policy in Snowflake?

Answer:

Masking policies allow column-level data masking based on user roles. Sensitive data like PII (e.g., SSNs, credit cards) can be conditionally hidden.

Example:

CREATE MASKING POLICY mask_email AS (val STRING)

RETURNS STRING ->

CASE

WHEN CURRENT_ROLE() IN (‘HR_ROLE’) THEN val

ELSE ‘********’

END;

🔹 32. Can Snowflake be deployed on-premise?

Answer:

No. Snowflake is a fully-managed cloud-native platform and is not available for on-premise deployment. It’s offered only on AWS, Azure, and GCP.

🔹 33. How does Snowflake ensure high availability?

Answer:

Snowflake separates compute and storage and runs on highly redundant cloud infrastructure. Features ensuring availability:

- Automatic failover between regions (Business Critical edition)

- Replication & failover for databases and shares

- Scalable multi-cluster architecture

🔹 34. How is semi-structured data handled in Snowflake?

Answer:

Snowflake natively supports semi-structured formats like JSON, Avro, XML, and Parquet using the VARIANT data type.

Example:

SELECT payload:customer.name::STRING FROM orders;

Snowflake automatically parses and optimizes querying using flattened metadata.

🔹 35. What is lateral flatten in Snowflake?

Answer:

LATERAL FLATTEN is used to normalize nested arrays in semi-structured data like JSON.

Example:

SELECT value:name, value:price

FROM products, LATERAL FLATTEN(input => products.details);

🔹 36. How to monitor query performance in Snowflake?

Answer:

Use the Query History UI or SQL views like SNOWFLAKE.ACCOUNT_USAGE.QUERY_HISTORY for:

- Duration

- Execution plan

- Blocked/waiting queries

- Warehouse usage

Also, enable Query Profiling to analyze steps like scan, join, sort, etc.

🔹 37. What is result set caching?

Answer:

When a user re-executes a query with the same syntax and data hasn’t changed, Snowflake returns results from the result cache, avoiding re-computation.

Result cache is valid for 24 hours and free (no compute charged).

🔹 38. How to manage cost optimization in Snowflake?

Answer:

Tips for cost control:

- Use auto-suspend and auto-resume for warehouses

- Size warehouses appropriately (not always XL)

- Monitor storage usage

- Use QUERY_HISTORY to identify expensive queries

- Enable materialized views for expensive computations

🔹 39. How do you audit user activity in Snowflake?

Answer:

- Use built-in views like:

- LOGIN_HISTORY

- QUERY_HISTORY

- ACCESS_HISTORY

- Integrate with Security Information and Event Management (SIEM) systems

- Use roles and grants wisely to avoid over-permissioning

🔹 40. What is the difference between a PIPE and a TASK?

Answer:

- PIPE: Automates data ingestion (e.g., Snowpipe for file-based loading).

- TASK: Automates SQL execution on a schedule (e.g., data transformation).

They can be chained: files land → PIPE loads → TASK transforms.

🔹 41. How does Snowflake pricing work?

Answer:

Snowflake uses a pay-per-use model:

- Storage: Charged per TB/month.

- Compute: Based on credits per second/minute used by virtual warehouses.

- Features: Some features like auto-clustering may cost extra credits.

🔹 42. What is external table in Snowflake?

Answer:

An external table lets you query data stored in external stages (like S3) without loading into Snowflake.

Useful for large-scale lakehouse architectures.

🔹 43. Explain the architecture of Snowflake.

Answer:

- Cloud Services Layer: Metadata, authentication, optimization.

- Compute Layer: Virtual Warehouses (scale-out compute).

- Storage Layer: Centralized, compressed columnar data.

This separation allows scalability, performance, and ease of management.

🔹 44. What are database replication and failover?

Answer:

Snowflake supports cross-region and cross-cloud replication for Business Critical and above editions.

You can manually or automatically failover to a secondary region in case of an outage.

🔹 45. How is indexing handled in Snowflake?

Answer:

Snowflake does not use traditional indexes. Instead, it relies on:

- Automatic metadata indexing

- Micro-partition pruning

- Columnar storage optimizations

You don’t need to manually manage indexes.

🔹 46. What’s the difference between stream and change tracking?

Answer:

- Stream: Tracks DML changes (insert/update/delete).

- Change Tracking: Lightweight metadata feature to track table modifications without storing actual changes.

Streams are better for ETL; change tracking for replication and CDC tools.

🔹 47. What is a warehouse cache?

Answer:

This cache stores recently accessed data files in local SSDs of the warehouse to improve performance for repeated queries.

It is warehouse-specific and resets when the warehouse is suspended.

🔹 48. Can you perform ACID operations in Snowflake?

Answer:

Yes. Snowflake is ACID-compliant, supporting transactions with BEGIN, COMMIT, and ROLLBACK.

Example:

BEGIN;

UPDATE accounts SET balance = balance — 100 WHERE id = 1;

UPDATE accounts SET balance = balance + 100 WHERE id = 2;

COMMIT;

🔹 49. What is a resource monitor?

Answer:

Resource monitors let you track and control credit usage. You can set thresholds to:

- Alert

- Suspend warehouses

- Abort queries

Example:

CREATE RESOURCE MONITOR my_monitor WITH CREDIT_QUOTA = 100;

🔹 50. Difference between clone and copy?

Answer:

- CLONE: Creates a zero-copy duplicate using metadata pointers.

- COPY INTO: Physically copies data from one object to another (useful for backups, exports).

🔚 Conclusion

Snowflake is rapidly becoming the backbone of cloud data warehousing. Whether you’re applying for a Data Engineer, Developer, or Architect role, knowing these questions can help you stand out. Keep building hands-on experience and mastering real-world scenarios — that’s where the real confidence comes from.

🙏 If you found this article helpful and want to support my work:

🔔 Follow me for more insights: LinkedIn | Medium |Blogger

No comments:

Post a Comment